A story of one Dockerfile

The story below follows the step-by-step evolution of one Dockerfile. You will face a few real-life situations in which the Docker build process was not trivial. We will touch on performance, security, and workflow optimization.

When you read these use cases, ask yourself about each one: What would I do? What is the best practice here? Do I see any issues?

For each situation, I provide an answer, which, in my experience, uncovers the best practice to solve the challenge within the Dockerfile. You’re invited to compare our solutions!

Challenge 1

A junior DevOps engineer, let’s call him James, joined a team to support Python developers. His first task was to dockerize a Python application. He found out that the developers were already using this Dockerfile:

FROM python:3.7

COPY ./requirements.txt .

RUN pip install -r requirements.txt

COPY . .

EXPOSE 8081 8082 8083

CMD ["./app.py"]

James started checking whether he could optimize it. He saw that the COPY command was used twice. Since each instruction in a Dockerfile is a layer, James decided he could reduce the number of layers, so he rewrote the Dockerfile this way:

FROM python:3.7

COPY . .

RUN pip install -r requirements.txt

EXPOSE 8081 8082 8083

CMD ["./app.py"]

There is one layer fewer now. Also, having fewer instructions improves readability.

He is a Docker Hero, isn’t he?

Answer

Not exactly.

James improved the Dockerfile’s readability, but he also hurt the developers’ productivity.

The developers had split the COPY into two instructions on purpose: one before the requirements installation and one after it. This way they can use the Docker build cache and save time on rebuilds.

Docker stores an image as a stack of layers, where each layer builds on the previous ones. A layer that has not changed can be reused from a previously built image. However, if one layer changes, Docker rebuilds it and all the layers after it, even if the instructions that produce those later layers did not change.

The requirements file usually changes much less frequently than the Python code, so the typical case is that the Python code has changed but the requirements file has not. That’s why, with the original Dockerfile, the pip install layer is taken from the cache without rebuilding, whereas with the “optimized” Dockerfile it is rebuilt even though the requirements haven’t changed.

However, the updated Dockerfile is acceptable when no Docker layer cache is available, for example on CI runners that always build from scratch.
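To make the caching argument concrete, here is a rough simulation in plain shell. It does not call Docker at all; it only fingerprints the files that each COPY instruction would see, on the simplified assumption that a COPY layer is reusable when the copied content is identical. The cache-demo folder, its file contents, and the two helper functions are made up for this sketch.

```shell
# Scratch project; file names and contents are made up for this demo.
mkdir -p cache-demo
printf 'requests==2.31.0\n' > cache-demo/requirements.txt
printf 'print("v1")\n' > cache-demo/app.py

# Fingerprint what each COPY instruction would bring into the image.
fingerprint_requirements() { cksum < cache-demo/requirements.txt; }
fingerprint_everything()   { cat cache-demo/requirements.txt cache-demo/app.py | cksum; }

req_before=$(fingerprint_requirements)
all_before=$(fingerprint_everything)

# A developer edits only the application code.
printf 'print("v2")\n' > cache-demo/app.py

req_after=$(fingerprint_requirements)
all_after=$(fingerprint_everything)

# "COPY ./requirements.txt ." sees identical input, so it and the
# following "RUN pip install" can come from the cache.
[ "$req_before" = "$req_after" ] && echo "requirements.txt unchanged: pip install layer is reusable"

# "COPY . ." sees different input, so it and every later instruction rerun.
[ "$all_before" != "$all_after" ] && echo "context changed: COPY . . and all later layers are rebuilt"
```

With James’s version, the only COPY is the second kind, so pip install sits behind an instruction that changes on every code edit.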

Challenge 2

While reviewing the repo, James noticed that the requirements file contained a private token:

> cat requirements.txt

git+https://__token__:glpat-4HeUio8dHeaWnNg6@gitlab.com/acme-apps/config-repo.git

…

James was unpleasantly surprised by this security breach.

At first, he only wanted to fix this vulnerability in the public Docker image, so he updated the Dockerfile this way:

RUN pip install -r requirements.txt

RUN rm requirements.txt

However, he still wasn’t happy about having a plain-text secret stored in Git, so he did the following:

He replaced the token with a placeholder this way:

git+https://__token__:GIT_TOKEN@gitlab.com/acme-apps/config-repo.git

Then he stored the token in a vault, pulled it in the CI/CD build stage, and replaced the placeholder with a sed command before building the image:

TOKEN=$(<pull token from a vault>)

sed -i "s/GIT_TOKEN/${TOKEN}/" requirements.txt

docker build .

He is a Docker Hero, isn’t he? The Docker image is secure now, isn’t it?

Answer

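Not exactly. Reminder: Docker stores images as layers. Files that are removed by a Dockerfile instruction still exist in the earlier layers. Here, COPY has already written requirements.txt, token included, into its own layer, and the later RUN rm only hides the file in the final filesystem.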

Would you like to be sure?

A quick workshop for a junior hacker:

Let’s create a secret file, then let’s copy it into a Docker image with COPY command, and delete it in the next instruction:

echo MY_SECRET_CODE_12345 > secret.txt

echo -e 'FROM busybox\nCOPY secret.txt .\nRUN rm secret.txt' > Dockerfile

docker build -t myimage .

We now have a Docker image, myimage, from which the secret.txt file has been removed.

Now, let’s run the docker save command to convert this Docker image into an archive:

docker save myimage -o myimage.tar

Unpack myimage.tar, and you will see a folder with subfolders, each of which represents a Docker layer (the exact layout varies between Docker versions). The subfolder that represents the COPY layer contains a tar archive with secret.txt inside.
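If you don’t feel like clicking through the subfolders by hand, the search can be scripted. The helper below is my own sketch, not a Docker tool: find_in_layers is a made-up name, and it simply assumes that every layer inside the saved archive is itself a tar file, so it tries to list each file it finds and ignores the ones that are not archives.

```shell
# find_in_layers ARCHIVE FILE
# Print FILE from every layer of a `docker save` archive that contains it.
find_in_layers() {
  archive=$1
  wanted=$2
  workdir=$(mktemp -d)
  tar -xf "$archive" -C "$workdir"

  # Layer archive names differ between Docker versions, so try every
  # regular file; non-tar files (JSON manifests etc.) just fail to list.
  find "$workdir" -type f | while read -r candidate; do
    member=$(tar -tf "$candidate" 2>/dev/null | grep -E "(^|/)${wanted}\$" | head -n 1) || true
    if [ -n "$member" ]; then
      echo "found in ${candidate#"$workdir"/}:"
      tar -xOf "$candidate" "$member"
    fi
  done

  rm -rf "$workdir"
}
```

After the docker save step above, calling find_in_layers myimage.tar secret.txt should print the layer path followed by the “deleted” secret.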

Challenge 3

As an alternative solution, James used build arguments.

He added this instruction at the beginning of the Dockerfile:

ARG GITLAB_TOKEN

and changed the requirements file accordingly:

git+https://__token__:${GITLAB_TOKEN}@gitlab.com/acme-apps/config-repo.git

And the CI command is now:

docker build --build-arg GITLAB_TOKEN=$(<pull the token from a vault>) .

No secret is stored in Git or in the image. James is calm and happy now.

Finally, he is the Docker Hero, isn’t he? Is the docker image finally secure?

Answer

Unfortunately, no.

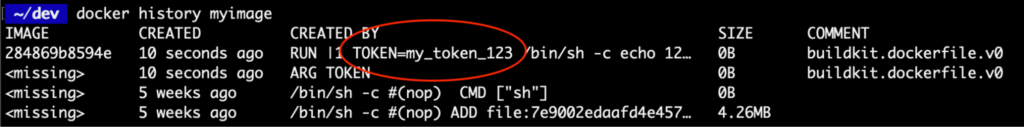

It looks like James had never heard of the docker history command.

This command makes build-time variables visible to any user of the image. Here is a very short example:

echo -e 'FROM busybox\nARG TOKEN\nRUN echo 123' | docker build -t myimage --build-arg TOKEN=my_token_123 -

docker history myimage

In the output, the CREATED BY column of the RUN layer shows TOKEN=my_token_123 in plain text.

<Frustration>

So what should James do? There are a few best practices for keeping secrets out of a Docker image.

- The easy way is to pull the secret at the beginning of the layer and remove it at the end of the same layer, i.e. in a single Docker instruction:

RUN TOKEN=$(<pull token from a vault>) \
 && sed -i "s/GIT_TOKEN/${TOKEN}/" requirements.txt \
 && pip install -r requirements.txt \
 && rm requirements.txt

- The official Docker recommendation is to use RUN with the --mount=type=secret argument. This argument creates filesystem mounts that the build can access; the secret mount type gives the build access to sensitive files without baking them into the image.
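Here is how the secret mount could look for James’s case. Treat it as a sketch under a few assumptions of mine: the secret id gitlab_token, the secret-demo folder, and the /tmp/requirements.txt scratch copy are names I picked for illustration, and the requirements file is assumed to still contain the GIT_TOKEN placeholder from Challenge 2. The snippet only writes the Dockerfile; it needs BuildKit to build.

```shell
mkdir -p secret-demo

# Dockerfile sketch: the token is readable only while this RUN step executes.
cat > secret-demo/Dockerfile <<'EOF'
# syntax=docker/dockerfile:1
FROM python:3.7
COPY ./requirements.txt .
# /run/secrets/<id> is the default location of a secret mount.
# The patched copy lives in /tmp and is deleted in the same instruction,
# so it does not end up in the resulting layer.
RUN --mount=type=secret,id=gitlab_token \
    sed "s/GIT_TOKEN/$(cat /run/secrets/gitlab_token)/" requirements.txt > /tmp/requirements.txt \
 && pip install -r /tmp/requirements.txt \
 && rm /tmp/requirements.txt
COPY . .
EXPOSE 8081 8082 8083
CMD ["./app.py"]
EOF
```

In CI, with the token exported as a GITLAB_TOKEN environment variable (again, a name I assume), the build would be started with docker build --secret id=gitlab_token,env=GITLAB_TOKEN and the folder that holds the application as the build context. Unlike a build argument, the secret value is not recorded in the image history.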

Challenge 4

After all these security adventures, James wanted to keep the image as secure as possible. He looked at the COPY . . instruction and began to worry that unnecessary files, including sensitive ones, might be copied into the image.

He asked developers to copy only the required files. They changed the Dockerfile, and now it looks like this:

COPY app.py convertor.py input.csv shared/ images/ sql/ ./

It’s much more secure now, isn’t it?

Answer

Yes. And much more complicated to maintain.

Now the developers will have to remember to add each new file and folder to the Dockerfile. The recommended best practice is to ask developers to put all the resources required for their app in a separate folder. However, it’s not always applicable. In our case, many resources were shared between different applications in this repo.

To avoid copying unwanted files into a Docker image, James would be better off using a .dockerignore file. It lists patterns for files and folders that the Docker client should exclude when assembling the build context.

Besides avoiding unintended exposure of secrets, it also prevents needless invalidation of the Docker build cache. This often happens because of system or IDE files, such as IntelliJ’s .idea folder or macOS’s .DS_Store.

We are usually not aware that these files have changed, but the Docker client is.
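A starting point for such a file might look like the one below. The entries are my guesses at what a typical Python repo would want to exclude, and .env and *.pem are hypothetical examples of local secrets, so adjust the list to your project. The ignore-demo folder only stands in for the root of the build context, which is where Docker looks for .dockerignore.

```shell
mkdir -p ignore-demo

# Patterns are matched relative to the root of the build context;
# a leading **/ makes a pattern apply at any depth.
cat > ignore-demo/.dockerignore <<'EOF'
# Version control and IDE/OS clutter
.git
.idea
**/.DS_Store
# Python build leftovers
**/__pycache__
**/*.pyc
# Local secrets (hypothetical file names)
.env
*.pem
EOF
```

With this in place, COPY . . can stay as simple as it was, and a changed .DS_Store no longer invalidates the cache.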

Challenge 5

James was still keen on his idea of reducing the number of redundant layers. He remembered that the EXPOSE instruction in a Dockerfile does not actually publish the ports and serves mainly as documentation.

“It serves equally well as documentation when it is commented out,” he thought.

So he updated the Dockerfile this way:

# EXPOSE 8081 8082 8083

You probably already have a hunch that James is not the Docker Hero, but what could be wrong?

Answer

Nothing but failing unit tests. The developers were using docker run with the --publish-all argument in their unit tests. This argument publishes every port listed in the Dockerfile’s EXPOSE instructions to a random host port. After James’s last commit, there were no ports left to publish this way.

Afterword

What should every James know?

- Don’t optimize what’s already working unless you clearly understand the benefit and the outcome.

- After applying changes – always test everything.

I hope you enjoyed reading and learned something new, but even if you knew everything – you’ve just confirmed that you are the true Docker Hero!